Organizations managing enormous amounts of data need a strong, scalable, reasonably priced storage solution. Enter the Hadoop Distributed File System (HDFS), a technology built to store and process data at scale. Businesses seeking to fully utilize big data increasingly look to Hadoop Engineers, who specialize in building and running these complex systems. But when the best talent can be hired remotely, building an in-house team can be costly, time-consuming, and often unnecessary.

The Power of HDFS: A Game Changer for Big Data

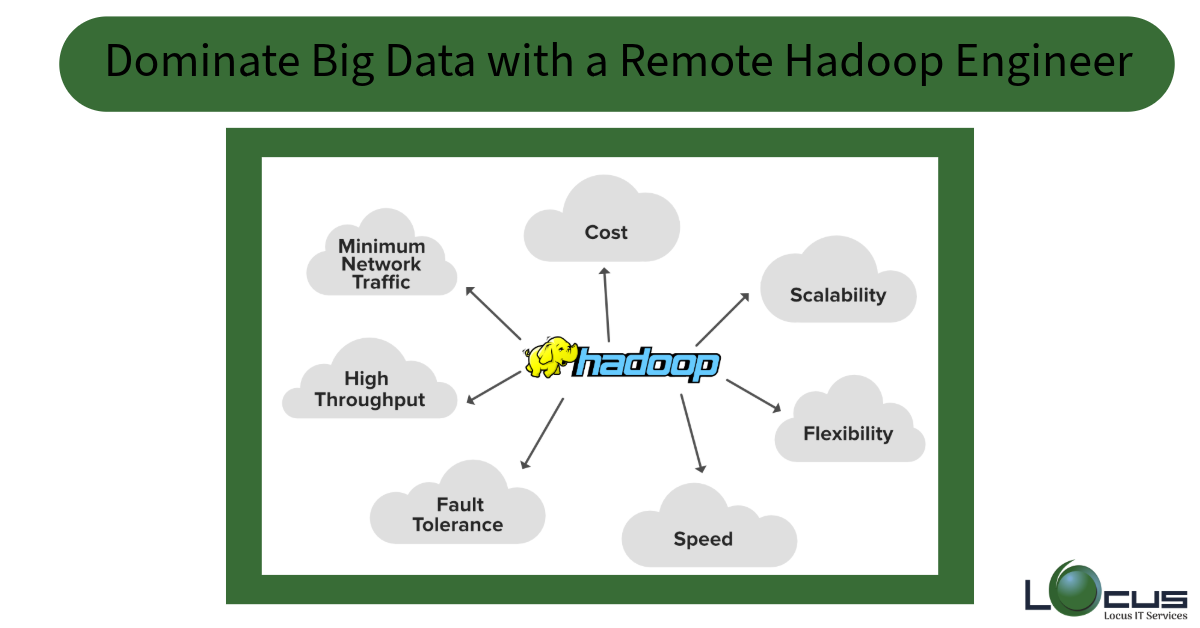

A core element of the Hadoop ecosystem, the Hadoop Distributed File System offers scalable, fault-tolerant, high-throughput data storage. For companies where data is the backbone (finance, healthcare, retail, and telecommunications, among others), HDFS is a strong fit because it is designed to manage vast volumes of data efficiently.

But using HDFS calls for advanced knowledge of security, cluster management, and distributed computing. This is where hiring a remote Hadoop Engineer becomes a strategic advantage. Without the overhead of running an in-house team, a highly qualified professional can integrate HDFS into your current infrastructure, tune its performance, and help keep your data secure.

Why Companies Are Opting to Hire Remote Hadoop Engineers

Companies in many different fields recognize the advantages of drawing on a worldwide pool of talent. Here are several reasons why hiring a remote Hadoop Engineer can be the right decision for your business.

Access to Top Global Talent

The best engineers are not always found within a 50-mile radius of your office. Remote hiring gives you access to skilled Hadoop Engineers from around the world, so your business benefits from current expertise without geographical limits.

Cost Effectiveness Without Sacrificing Standards

Creating an internal Hadoop team calls for investment in workspace, training, and infrastructure. Remote engineers work from their own environments, which cuts those overhead expenses while delivering the same quality of work.

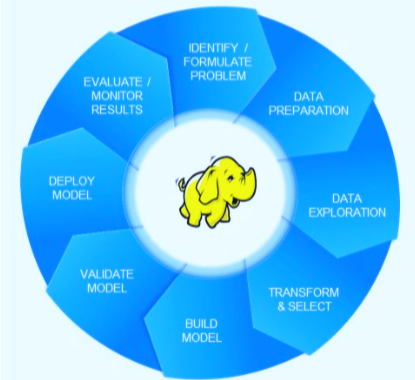

Quick Implementation of Big Data Solutions

Implementing HDFS calls for both strategy and precision. A competent Hadoop Engineer can quickly design, implement, and optimize HDFS clusters, reducing deployment time and shortening the time-to-value for your big data projects.

Flexibility and Scalability

Business needs change, and your technical team should change with them. Remote hiring lets you scale your Hadoop expertise up or down as each project requires, which keeps operations flexible and agile.

Around-the-Clock Support and Monitoring

With a remote team spanning multiple time zones, companies can have their data infrastructure monitored and optimized around the clock. This matters most for businesses that depend on real-time data processing.

Key Skills to Look for When Hiring a Remote Hadoop Engineer

When searching for the right Hadoop Engineer, weigh technical proficiency, problem-solving ability, and adaptability. The following are some core competencies to prioritize:

- Mastery of HDFS: thorough understanding of Hadoop’s storage architecture, including block size configuration, NameNode management, and data replication.

- Big Data Ecosystem Proficiency: practical expertise with related technologies in the Hadoop ecosystem, including Apache Hive, MapReduce, Apache Spark, and HBase.

- Cloud Experience: experience deploying HDFS in cloud environments such as AWS, Azure, or Google Cloud.

- Security and Governance: knowledge of Kerberos authentication, encryption, and governance frameworks to protect data integrity and security.

- Performance Optimization: ability to tune cluster performance, allocate resources effectively, and lower latency.

- Programming and Scripting: proficiency in Java, Python, or Scala for data processing applications.

- Problem-Solving Mindset: ability to troubleshoot system failures, streamline workflows, and improve storage efficiency.
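
To make the block size and replication items in the list above concrete, here is a minimal sketch of the capacity arithmetic a Hadoop Engineer might do when sizing a cluster. It assumes the stock HDFS defaults of 128 MiB blocks and three replicas; your cluster may be configured differently, and the `hdfs_footprint` helper is hypothetical, not part of any Hadoop API.

```python
# Assumed defaults: 128 MiB blocks and 3 replicas are the stock HDFS
# settings (dfs.blocksize, dfs.replication); real clusters may override them.
BLOCK_SIZE_BYTES = 128 * 1024 * 1024
REPLICATION_FACTOR = 3


def hdfs_footprint(file_size_bytes, block_size=BLOCK_SIZE_BYTES,
                   replication=REPLICATION_FACTOR):
    """Return (block_count, raw_bytes_stored) for a single file.

    Hypothetical helper for illustration only.
    """
    if file_size_bytes < 0:
        raise ValueError("file size must be non-negative")
    # Integer ceiling division: a partially filled final block still
    # counts as one block the NameNode has to track.
    block_count = -(-file_size_bytes // block_size)
    # The final block only occupies the bytes it actually holds, so raw
    # usage is the logical size multiplied by the number of replicas.
    raw_bytes = file_size_bytes * replication
    return block_count, raw_bytes


if __name__ == "__main__":
    blocks, raw = hdfs_footprint(1024 ** 3)  # a 1 GiB file
    print(f"{blocks} blocks, {raw / 1024 ** 3:.0f} GiB of raw storage")
```

Under these assumptions, a 1 GiB file splits into 8 blocks and consumes 3 GiB of raw disk across the cluster, which is why replication settings feed directly into storage budgets.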

Future-Proof Your Business with the Right Talent

Today, an organization’s competitive edge depends largely on how well it can put big data to use. HDFS underpins many modern data architectures, but its full potential is only realized with the right expertise. Hiring a remote Hadoop Engineer helps your business stay ahead of the curve and adopt new technologies without the logistical constraints of in-house hiring.

Invest in the right talent now to equip your company with scalable, secure, and efficient data solutions that support business growth. Big data is here; make sure your business is ready to lead the way!

Power your data strategy with Locus IT—Hire expert Remote Hadoop Engineers for seamless scalability and performance.